What a gap analysis usually misses — findings from 20,000+ strategic plans, two real turnarounds, and the four mistakes we see most often.

Most gap analyses get the gap wrong.

Not because the math is hard — the math is the easy part. Current state, target state, subtract. Done.

The gap analysis gets it wrong because teams measure the gap they can see, then build a plan to close the gap they can see, and never check the gap that’s actually killing the strategy. Across 20,000+ strategic plans on the ClearPoint platform, we see the same pattern repeat. Projects finish. Initiatives complete. Status meetings happen on Tuesday like they’re supposed to. And the goals those projects were meant to serve drift further from target every quarter.

This article is for the people running that meeting. We’re going to do three things. Walk through the four steps of a gap analysis the way they should be done. Surface the proprietary numbers from our platform that change how you’ll think about your own gaps. And show two real ClearPoint customers — one healthcare, one local government — who ran a gap analysis, found the wrong gap first, and only closed the right one after they re-baselined.

No hypothetical bank. No imaginary soup kitchen. Just the data and the work.

What is a gap analysis?

A gap analysis is the discipline of comparing where you are to where you said you’d be — then asking, with some honesty, why the difference exists.

That’s it. Three questions:

- Where are we now?

- Where did we say we’d be?

- What is the gap actually telling us?

The third question is where most teams stop short. They treat the gap as a target to close. We’ve watched 20,000+ plans run, and the teams who close gaps reliably treat the gap as a diagnosis — a signal that something upstream is wrong with the plan, the owners, the metric, or the assumptions baked in years ago.

The rest of this article is about reading that diagnosis well.

In Practice. Across 1.4 million measure-status data points on the ClearPoint platform, only 50.1% of KPIs are on-track at any given moment. State governments run the widest gap (76% of measures off-target). Higher Education sits second at 64%. Healthcare follows at 55%. The “average gap” you see in textbooks is closer to a coin flip than a benchmark.

What 20,000+ strategic plans reveal about the gaps you don’t see

Before we get to the four steps, three findings from our platform that change the framing.

Finding 1 — Goals fail more than projects

When we look across 254,302 strategic objectives, only 39.3% are on-track. The rest are yellow or red. That’s a 60.7% goal-level gap, system-wide.

Now compare initiatives — the projects organizations run to close goal gaps. Across 279,600 initiatives, 77.6% are on-track.

Read that again. Most projects stay on track. Most goals don’t.

This is the gap nobody plans for. Teams charter the project, work the project, ship the project — and the goal it was meant to serve still isn’t where the plan said it would be. If your gap analysis stops at “are we delivering the projects?” — yes, you probably are. That’s not the gap that’s hurting you.
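The arithmetic behind that comparison is simple enough to sketch. The snippet below is a minimal illustration that uses only the two on-track rates quoted above; the function name `on_track_spread` is our own label for this example, not a ClearPoint feature.

```python
def on_track_spread(initiative_rate: float, objective_rate: float) -> float:
    """Percentage-point spread between project-level and goal-level on-track rates."""
    return round(initiative_rate - objective_rate, 1)


# Platform-wide figures quoted in this article.
INITIATIVES_ON_TRACK = 77.6  # % of 279,600 initiatives
OBJECTIVES_ON_TRACK = 39.3   # % of 254,302 strategic objectives

spread = on_track_spread(INITIATIVES_ON_TRACK, OBJECTIVES_ON_TRACK)
goal_level_gap = round(100 - OBJECTIVES_ON_TRACK, 1)

print(f"Goal-level gap: {goal_level_gap}%")        # Goal-level gap: 60.7%
print(f"Project-vs-goal spread: {spread} points")  # Project-vs-goal spread: 38.3 points
```

Running the same two lines against your own plan tells you whether your projects and your goals are telling the same story.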

Finding 2 — The gap size depends almost entirely on what you’re tracking

Sector matters. A lot.

A state government running a gap analysis on its KPIs is staring at a 76% off-target rate before it even starts. A K-12 district is at 30%. The four-step template is the same. The conclusions are not.

Finding 3 — 81% of assigned metric owners never update their data

This is the finding that explains the other two. Across the platform, 81.1% of users assigned as metric owners have never logged in to update their own data. We call them phantom owners. Their name sits next to a measure. The measure goes stale. The dashboard turns yellow then red. Nobody notices because the owner was never going to notice.

Most gap analyses we’ve seen identify the wrong root cause because of this. The team assumes the gap is a strategy problem. It’s almost always an ownership problem. Five whys often deserve a sixth: why is the owner of this measure not the person who can move it?

We’ll come back to this in the mistakes section.

How to conduct a gap analysis: 4 steps that actually close the gap

The structure works. The execution is where teams lose. Here’s the four-step template anchored to what the data shows.

Step 1 — Anchor the current state to a metric you can defend

Pick one metric per gap analysis. One. The temptation is to run the analysis across every department and every objective, and the result is a 40-tab Excel file nobody reads. ClearPoint platform data shows the average plan tracks 7.4 strategic objectives (median 6) — and most of them sit on the same dashboard, untouched, between quarterly reviews.

Defend the metric like this:

- It is owned by someone who controls the inputs.

- It updates at least monthly.

- A real person can answer “what would it take to move this by 10%?”

If you can’t pass those three tests, you don’t have a metric. You have a placeholder.
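The three tests above can be written down as a checklist. This is a minimal sketch, not a ClearPoint API: the class `MetricCandidate`, its field names, and the two example metrics are all hypothetical, and "at least monthly" is assumed to mean twelve or more updates per year.

```python
from dataclasses import dataclass


@dataclass
class MetricCandidate:
    name: str
    owner_controls_inputs: bool   # test 1: the owner controls the inputs
    updates_per_year: int         # test 2: at least monthly means 12 or more
    has_ten_percent_answer: bool  # test 3: someone can say what moves it by 10%


def failed_tests(metric: MetricCandidate) -> list[str]:
    """Return the tests a candidate fails; an empty list means it is defensible."""
    failures = []
    if not metric.owner_controls_inputs:
        failures.append("owner does not control the inputs")
    if metric.updates_per_year < 12:
        failures.append("updates less often than monthly")
    if not metric.has_ten_percent_answer:
        failures.append("nobody can say what would move it by 10%")
    return failures


# Hypothetical examples for illustration only.
placeholder = MetricCandidate("Customer satisfaction", False, 4, True)
defended = MetricCandidate("Report turnaround time", True, 12, True)

print(failed_tests(placeholder))  # two failures: ownership and update cadence
print(failed_tests(defended))     # []
```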

Are you tracking the right strategic objectives? Claim your free library of 56 strategic objectives.

Step 2 — Set the target on a date, not a vibe

The future state needs three components: the value, the date, and the assumption that justifies the trajectory. Most plans skip the third.

A target like “30% growth by 2029” is not a target. “30% growth by Q4 2029, assuming we close two enterprise deals per quarter and our churn stays under 5%” is. The second version tells you exactly what to look for when the gap opens up.

This matters for the analysis itself. The median ClearPoint project takes 10.94 months. If your target window is shorter than that and your closing plan requires new initiatives, the gap analysis is already wrong about timing.
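A target with all three components, plus the timing check described above, might look like the sketch below. The `Target` class and `timing_risk` function are hypothetical names. Treating "Q4 2029" as December 31, 2029 and using an average month of 30.44 days are simplifying assumptions.

```python
from dataclasses import dataclass, field
from datetime import date

MEDIAN_PROJECT_MONTHS = 10.94  # median ClearPoint project duration quoted above
AVG_DAYS_PER_MONTH = 30.44     # approximation, assumed for this sketch


@dataclass
class Target:
    value: float
    due: date
    assumptions: list[str] = field(default_factory=list)

    def is_complete(self) -> bool:
        """A target without a stated assumption is missing its third component."""
        return bool(self.assumptions)


def timing_risk(target: Target, today: date, needs_new_initiatives: bool) -> bool:
    """True if the closing plan needs new projects but the window is shorter than a median project."""
    months_left = (target.due - today).days / AVG_DAYS_PER_MONTH
    return needs_new_initiatives and months_left < MEDIAN_PROJECT_MONTHS


growth = Target(
    value=30.0,
    due=date(2029, 12, 31),  # "Q4 2029", assumed to mean the end of the quarter
    assumptions=["two enterprise deals per quarter", "churn stays under 5%"],
)

print(growth.is_complete())                                              # True
print(timing_risk(growth, date(2029, 6, 1), needs_new_initiatives=True))  # True: about 7 months left
print(timing_risk(growth, date(2028, 1, 1), needs_new_initiatives=True))  # False: about 24 months left
```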

Step 3 — Identify the gap, then ask what kind of gap it is

There are four kinds. They look identical on a chart. They require different fixes.

| Gap type | What it looks like | What it actually is |
| --- | --- | --- |
| Performance gap | Metric is below target | The work isn’t producing the result |
| Tracking gap | Metric hasn’t updated | Phantom owner (see Finding 3) |
| Definition gap | Metric moved, target didn’t | The target was set against assumptions that no longer apply |
| Strategy gap | Projects deliver, goal still misses | The strategy itself is wrong, not the execution |
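One way to read the table is as a triage order. The sketch below is an assumption on our part, not a rule the platform enforces: it checks for stale data first (because nothing else can be trusted until the metric is current), then stale assumptions, then whether projects are delivering. The function and parameter names are hypothetical.

```python
from enum import Enum


class GapType(Enum):
    PERFORMANCE = "performance"
    TRACKING = "tracking"
    DEFINITION = "definition"
    STRATEGY = "strategy"


def classify_gap(metric_is_stale: bool,
                 assumptions_still_hold: bool,
                 projects_on_track: bool) -> GapType:
    """Triage an off-target measure into one of the four gap types."""
    if metric_is_stale:
        return GapType.TRACKING     # the data cannot be trusted yet
    if not assumptions_still_hold:
        return GapType.DEFINITION   # the target is out of date
    if projects_on_track:
        return GapType.STRATEGY     # projects deliver, goal still misses
    return GapType.PERFORMANCE      # the work isn't producing the result


print(classify_gap(metric_is_stale=True, assumptions_still_hold=True, projects_on_track=True).value)
# tracking
print(classify_gap(metric_is_stale=False, assumptions_still_hold=True, projects_on_track=True).value)
# strategy
```

The ordering matters more than the code: a stale metric can disguise any of the other three types, so it is worth ruling out first.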

The 5 Whys exercise — yes, the same one you’ve heard about — works as a triage. Run it once. The answer at “Why #5” tells you which type of gap you’re actually looking at.

The most common “Why #5” answer we see across the platform: the plan inherited the org chart instead of being designed around who can actually move each metric. Cities, hospitals, banks: same pattern. A goal gets assigned to a department because the department’s name matches the goal’s keyword. Nobody checked whether that department could actually move the metric.

That’s the diagnosis the gap was hiding.

Step 4 — Build the closing plan around ownership, not action items

Once you know what kind of gap you have, the closing plan is short:

- Performance gap? Add an initiative. Set a 90-day check-in. The 77.6% initiative on-track rate works in your favor here: projects, on average, stay on track.

- Tracking gap? Reassign ownership before adding any new initiatives. There is no point launching a project to move a metric whose owner doesn’t see it.

- Definition gap? Re-baseline. Move the target. Document the assumption that changed. Most teams find this politically uncomfortable; it’s almost always the right move.

- Strategy gap? Pause the projects. Run a strategy review. This is rare. When it happens, it’s the only one of the four where doing more of what you were doing makes things worse.
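The four responses above amount to a lookup from gap type to first move. A minimal sketch, with the dictionary and function names being our own illustration:

```python
# First move for each gap type, paraphrased from the list above.
FIRST_MOVE = {
    "performance": "Add an initiative and set a 90-day check-in.",
    "tracking": "Reassign ownership before adding any new initiatives.",
    "definition": "Re-baseline: move the target and document the assumption that changed.",
    "strategy": "Pause the projects and run a strategy review.",
}


def first_move(gap_type: str) -> str:
    """Return the first closing action for a diagnosed gap type."""
    key = gap_type.strip().lower()
    if key not in FIRST_MOVE:
        raise ValueError(f"Unknown gap type: {gap_type!r}")
    return FIRST_MOVE[key]


print(first_move("Tracking"))  # Reassign ownership before adding any new initiatives.
```

Raising an error on an unknown type is deliberate: a gap that has not been diagnosed should not get a closing plan by default.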

Download our free eBook on 8 effective strategic planning templates.

The four gap analysis mistakes we see most often

We get asked to look at gap analyses every week. These four show up over and over.

Mistake 1 — Running the analysis at the project level instead of the goal level

Teams ask “are our projects on-track?” The answer is almost always yes. 77.6% on-track on the ClearPoint platform. Then they wonder why the strategy is drifting. Run the gap analysis on the goal, not the work that’s supposed to serve it.

Mistake 2 — Confusing a delay with a gap

A project that’s running 60 days late is a delay. A goal whose trend line is moving away from target is a gap. The delay can be project-managed. The gap has to be diagnosed. Treating one as the other costs months.
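The difference shows up in the trend line. The sketch below assumes you have a short series of period-end readings for one measure and flags a gap only when the distance from target grows in every consecutive period. The function name and sample readings are hypothetical.

```python
def is_widening(readings: list[float], target: float) -> bool:
    """True if the distance from target grew in every consecutive period."""
    distances = [abs(target - r) for r in readings]
    if len(distances) < 2:
        return False  # one reading is not a trend
    return all(later > earlier for earlier, later in zip(distances, distances[1:]))


# Hypothetical quarterly readings against a target of 100.
print(is_widening([80, 76, 71], target=100))  # True: moving away from target
print(is_widening([70, 75, 80], target=100))  # False: behind, but closing
```

The second series is still short of target, but it is a delay at worst. Only the first one needs a diagnosis.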

Mistake 3 — Never re-baselining

Targets set in 2022 against 2022 assumptions are still sitting in plans today. The gap they describe is partly real and partly an artifact of stale assumptions. We see this most in healthcare and higher education, where the post-COVID re-planning never fully landed. If the gap on a measure has been wider than 30% for four consecutive quarters, the issue may not be the work — it may be the target.
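The four-quarter rule of thumb can be checked mechanically. This sketch assumes "wider than 30%" means the shortfall relative to the target value, which is one reasonable reading rather than a platform definition. The function names and sample data are hypothetical.

```python
def relative_gap(actual: float, target: float) -> float:
    """Distance from target as a fraction of the target."""
    return abs(target - actual) / abs(target)


def should_review_target(quarterly_actuals: list[float], target: float,
                         threshold: float = 0.30, quarters: int = 4) -> bool:
    """True if the last `quarters` readings were all more than `threshold` off target."""
    if target == 0 or len(quarterly_actuals) < quarters:
        return False  # not enough history, or a relative gap is undefined
    recent = quarterly_actuals[-quarters:]
    return all(relative_gap(a, target) > threshold for a in recent)


# Hypothetical measure with a target of 100.
print(should_review_target([60, 62, 65, 68], target=100))  # True: gaps of 40%, 38%, 35%, 32%
print(should_review_target([60, 62, 65, 72], target=100))  # False: last quarter is 28% off
```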

Mistake 4 — Assigning owners who can’t update

This is the phantom-owner problem. 81.1% of assigned owners never log in. A gap analysis that identifies a closing plan but assigns it to one of those owners is launching a plan into a void. Before you finalize the closing actions, check the last-update date on the measure. If it’s “never,” the gap is not where you think it is.
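Checking last-update dates before assigning closing actions takes a few lines. In this sketch a measure is flagged if it has never been updated or if its last update is older than 31 days. The threshold is an assumption borrowed from the "updates at least monthly" test in Step 1, and the measure names and dates are invented for illustration.

```python
from datetime import date, timedelta
from typing import Optional


def needs_owner_check(last_updated: Optional[date], today: date,
                      max_age_days: int = 31) -> bool:
    """True if a measure was never updated or has gone stale."""
    if last_updated is None:
        return True  # "never" is the clearest phantom-owner signal
    return today - last_updated > timedelta(days=max_age_days)


# Hypothetical measures mapped to their last-update dates.
measures = {
    "Stroke response time": date(2024, 5, 20),
    "Staff turnover": None,
    "Permit backlog": date(2023, 11, 2),
}
today = date(2024, 6, 1)

flagged = [name for name, last in measures.items() if needs_owner_check(last, today)]
print(flagged)  # ['Staff turnover', 'Permit backlog']
```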

Two real gap analyses, two real turnarounds

In place of the hypothetical bank and the imaginary soup kitchen, here’s what this actually looks like in our customer base.

San Juan Regional Medical Center — the reporting gap that wasn’t a strategy gap

San Juan Regional is a 198-bed Level III trauma center in the Four Corners region of New Mexico. Their gap analysis started simple: the leadership team needed faster, more reliable reporting against the hospital’s Balanced Scorecard. The “current state” was a half-day per management report, with departments tracking metrics in Excel and printing them.

The team initially assumed the gap was a strategy execution gap. The diagnosis showed it was a tracking gap. The previous performance management software had been reduced to a data-storage tool. Reports couldn’t be tailored. Metrics that had once mattered were buried, no longer reviewed, no longer current.

The fix wasn’t more strategy. It was migrating to a system that gave each department its own scorecard tied to the master Balanced Scorecard, plus the ability to attach analysis fields and audit-traceable evidence.

The outcome ClearPoint published with San Juan: an 89% reduction in reporting time for management reports, and an 83% improvement in build time for new reporting requirements. Their DNV stroke accreditation audit, in the words of the hospital’s quality control manager, “went flawlessly.” The strategy hadn’t been broken. The instrument measuring it had been.

Source: ClearPoint case study — San Juan Regional Medical Center

City of Durham, NC — the gap analysis they had to run twice

Durham is the fourth-largest city in North Carolina, ~283,000 residents. The city launched its first formal strategic plan in 2011, on ClearPoint. By 2012 — one year in — most departments had reverted to Excel. A second gap analysis was run, and the team found something uncomfortable: the gap wasn’t in the strategy. It wasn’t even in the software. It was in ownership.

There was no clear accountability for performance measures. Updates were optional in practice. Without ownership, the plan went stale on contact with operations.

In 2014, Durham re-launched. This time, department directors were given explicit responsibility for their measures. Performance was linked to budget requests and employee evaluations. The city went from running on 22 Excel files with over 100 tabs to a single ClearPoint instance shared with Durham County for joint city-county initiatives.

The outcomes the city published:

- Reduced background-check staffing from four positions without impact on community safety (because the data showed the workload didn’t justify the headcount).

- Avoided hiring 4–5 communication officers per year after data showed the city was already hitting a 95% emergency response level.

- A joint city-county CPR/AED training program that has trained over 1,700 Durham Public Schools students.

Durham’s strategic plan project manager Jay Reinstein, on the record: “With data now driving decision making, it’s all about results.”

The lesson worth repeating: the first gap analysis Durham ran was correct on the math and wrong on the diagnosis. The second one — the one that worked — looked past the metrics and into who was responsible for moving them.

Source: ClearPoint case study — City of Durham

Closing the gap with ClearPoint

A gap analysis produces three things at minimum: a defended metric, a target with a date, and a closing plan with assigned ownership. ClearPoint’s job, after the analysis, is to make sure those three things don’t quietly fall apart over the eleven months the work takes.

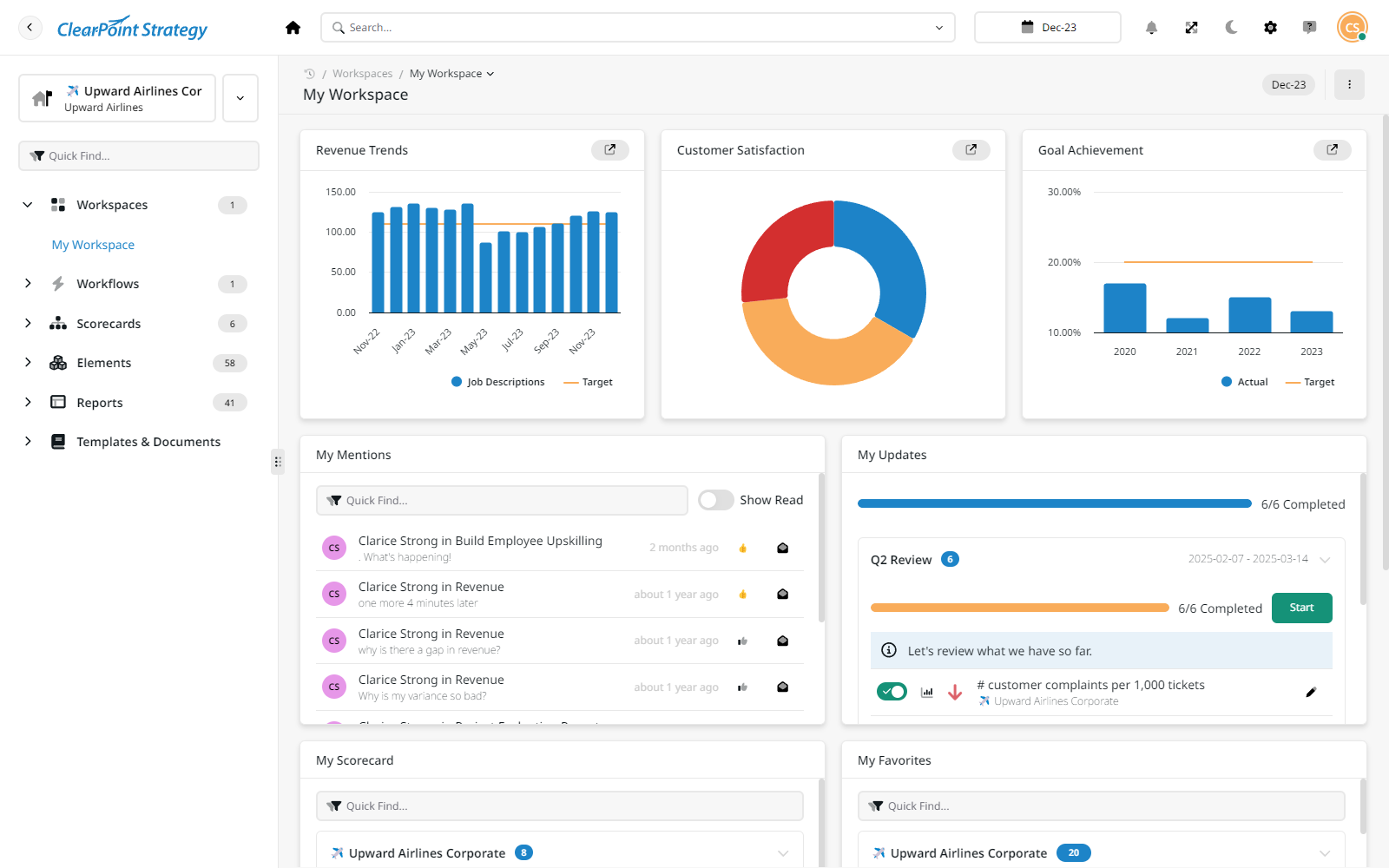

Here’s what that looks like in the platform, in the order it actually happens after a gap analysis ends.

Wire each gap to the metric and the goal it serves. Every gap identified gets linked, in ClearPoint, to the measure it tracks and the strategic objective it supports. When the measure updates, the goal updates, and the dashboard reflects the closing trajectory in real time. You stop running the gap analysis quarterly because the gap is reported daily.

Make ownership visible, and make non-update visible too. Phantom owners cost real money. ClearPoint surfaces the last-update date on every measure, sends automated reminders to assigned owners on the cadence you set, and escalates when measures go stale. Across the platform, districts that wire up workflow automation evaluate 45.7% of their measures versus 32.1% for those that don’t, a 13.6-point lift just from making non-update visible.

Run the closing plan as projects, with the same rigor as the goal. Initiatives in ClearPoint live alongside the measures they’re meant to move. Project dashboards, Gantt charts, RAG status, and dependency tracking — all in the same view. When an initiative is on-track but the measure isn’t, the system shows you the gap between the two, which is the diagnostic Step 3 was about.

Report once, distribute everywhere. Reporting is what kills follow-through. ClearPoint automates the assembly of monthly, quarterly, and annual scorecards from the same underlying data — different audiences, same source of truth. San Juan’s 89% reduction in reporting time is not a marketing number. It’s what happens when the report stops being assembled by hand.

Re-baseline when the data tells you to. When a measure has been more than 30% off-target for four consecutive quarters, that’s not a project problem; it’s a target problem. ClearPoint’s trend analysis surfaces these cases, which is when you re-run the gap analysis on the target itself, not on the work.

Book your free 1-on-1 demo with ClearPoint Strategy.

See your gap analysis through

A gap analysis is not a document. It is a question you keep asking until the answer stops surprising you. Most plans don’t fail because the gap was too wide. They fail because nobody noticed the gap had moved.

The work is to stay honest about which gap you’re actually closing.

Frequently Asked Questions

Who should run a gap analysis?

A gap analysis works best when the people who own the metrics are in the room. That usually means department heads plus one strategy lead — small enough to make decisions, senior enough to commit to the closing actions. Across 20,000+ ClearPoint plans, the analyses that close gaps share one thing: the people identifying the gap are the same people who can move it.

What are the four types of strategic gap?

Performance, tracking, definition, and strategy. A performance gap means the work isn’t producing the result. A tracking gap means the metric isn’t being updated (81% of assigned owners on the ClearPoint platform never log in). A definition gap means the target was set against assumptions that no longer apply. A strategy gap means the projects are delivering but the goal still misses, because the strategy itself is wrong. All four look identical on a chart, and each requires a different fix.

How often should we rerun a gap analysis?

Once per quarter as a formal exercise, but the underlying gap should be visible daily. ClearPoint platform data shows the median strategic project takes 10.94 months — long enough that quarterly reviews can lag the work. The teams who close gaps reliably treat the gap analysis as a continuous reading, not an annual event.

What is the most common mistake in gap analyses?

Running the analysis at the project level instead of the goal level. Across the ClearPoint platform, 77.6% of initiatives are on-track but only 39.3% of the strategic objectives those initiatives serve are. Teams chase project completion and miss the gap between projects-delivering and goal-still-failing. That’s the gap most plans don’t catch in time.

What does a gap analysis template need to include?

At minimum: one defended metric, a target with a date and the assumption behind it, the four-type gap diagnosis (performance, tracking, definition, strategy), the 5-whys exercise to surface root cause, and a closing plan with explicit ownership tied to whoever controls the inputs. Anything less and the template becomes an exercise that doesn’t close the gap it identified.
